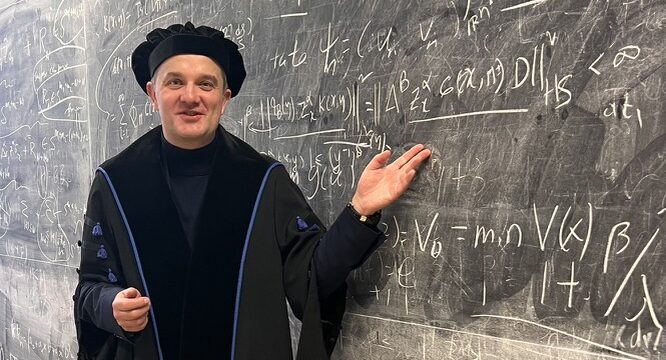

Artificial intelligence is no longer a technology of the future, but an everyday working tool. However, the question remains how to use AI in a way that supports research and teaching without losing one’s own contribution and critical thinking. We discussed this with TalTech researcher Sven Nõmm.

How have you already used artificial intelligence in your work, or where do you see AI helping you the most in the future?

In teaching, I limit the use of third-party tools. So far, all student assignments have been assessed without artificial intelligence. In my courses, however, I teach how to develop and program artificial intelligence systems and tools. In my own research, I have also developed some AI tools myself, and these tools produce results that I publish.

Recently, I have also focused on AI theory and innovative techniques in this field. I use text-correction tools, and I use code generation where I need to format output or create high-quality graphs.

I have also started using tools that help find and map articles for literature reviews. In administrative work, I use AI translation tools only to avoid writing the same text twice in different languages. Time will tell whether I can maintain these proportions or whether I will need to find a new balance between using and not using AI-based tools.

What has been your biggest “aha moment” related to artificial intelligence over the past year?

Just before last Christmas, I was working with high-dimensional sensors and discovered that my code did not work on certain computer architectures. At first, I thought it was a memory issue, but I decided to check my suspicion using the ChatGPT EDU service provided by the university. I was surprised by the reasoning capability of the latest version. With the help of AI, I understood that the problem was caused by differences between hardware architectures, and the AI was able to suggest alternative code that does not depend on the GPU type.

Recently, one of my doctoral students showed me how he had integrated another popular AI-based tool into his development workflow. On its own, this was not an aha moment, but when I combine this observation with the earlier aha moment and with what has changed recently, I see that the progress has been enormous. I do not ask anyone what the situation will be in five years. The question is rather what will happen in the field of artificial intelligence within one year. It is somewhat reassuring that I still see researchers paying a great deal of attention to the fundamentals of machine learning.

What is the most important principle or boundary you adhere to when using artificial intelligence?

The outcome of my work should be my own contribution. The initial version of any text is always written by me. The main (novel) components of the code are also created by me, and I read all the articles I cite. I believe that the same principle should apply to the use of AI for all purposes. When using artificial intelligence, a person must be able to maintain critical thinking and ensure that the completed work, assignment, or decision ultimately remains their own and that autonomy is not fully handed over to AI.

In your opinion, what should a university definitely do well in the age of AI to support both people and quality?

I believe that, in terms of AI support, our university is doing more than enough. It provides infrastructure (both at the hardware and software level), organizes seminars, and offers guidelines and training. AI is a rapidly developing field, and some of the tools we use today may be outdated in a year. However, the system of seminars, training, and AI champions (those leading the implementation of this technology) will remain. It functions as a workflow through which information is shared, experiences are exchanged, and university members are supported in keeping up with these changes. Where we fall short is in awareness of the risks of delegating too many tasks to AI.

Students should be aware that a lack of repetitive practice negatively affects their studies. We must also ensure that students understand and actually acquire the knowledge and skills for which they chose to study a particular field. This is where both our university and most educational institutions worldwide currently face a challenge that requires serious attention. And of course, we must preserve at least one non-AI study track for those who prefer a more classical approach to education and research.

I am pleased that AI-FTK has contributed to creating this knowledge-sharing ecosystem. Undoubtedly, 2025 was an interesting year. The responsibilities of the focus top center were adjusted, the seminars gained some popularity, the first international contacts emerged, and the annual seminar grew and connected our university with the public sector. Naturally, managing this new structure at the university brings some challenges. Let us see where this year will take us.